If Cloud has been the most used word of the last five years, the words buzzing around the IT world in the last five months are Digital Transformation.

From Wikipedia:

“Digital Transformation (DT or DX) is the adoption of digital technology to transform services and businesses, through replacing non-digital or manual processes with digital processes or replacing older digital technology with newer digital technology”.

Or: Digital Transformation must help companies to be more competitive through the fast deployment of new services always aligned with business needs.

Note 1: Digital Transformation is the basket, the technologies to be used are the apples, services are the means of transport, and the shops are the clients/customers.

1. Can all existing architectures work for Digital Transformation?

- I prefer to answer by rephrasing the question in more appropriate words:

2. Does Digital Transformation require that data, applications, and services move to and from different architectures?

- Yes, this is a must, and it is called Data Mobility.

Note 2: Data mobility refers to the innovative technologies that can move data and services among different architectures, wherever they are located.

3. Does Data-Mobility mean that services can be independent of the underlying infrastructure?

- Not completely. It means that, although there is currently no standard language that lets different architectures/infrastructures talk to each other, Data-Mobility can get over this limitation.

4. Is it independent of any vendor?

- When a standard is released, all vendors want to implement it as soon as possible because they are sure its features will improve their revenue. Currently, this standard does not exist yet.

Note 3: I think the reason is that there are so many objects to count, analyze, and develop that the economic effort required is not justified at the moment.

5. Is there already a ready “Data-Mobility” technology?

The answer could be quite long but, to make a long story short, I wrote this article, which is composed of two main parts:

- Application Layer (Container – Kubernetes)

- Data Layer (Backup, Replica)

Application Layer – Container – Kubernetes

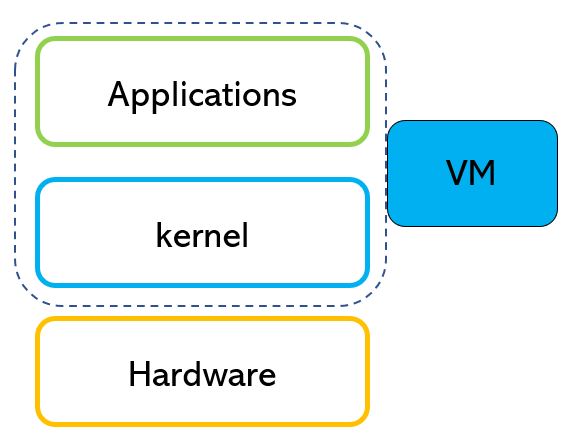

In the modern world, services run in a virtual environment (VMware, Hyper-V, KVM, etc.).

There are still old services that run on legacy architectures (Mainframe, AS400 …). Old doesn’t mean that they are not updated, just that they have a very long history.

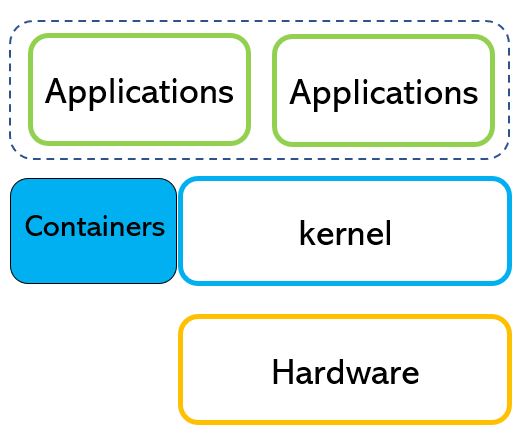

In the coming years, services will run in a special “area” called a “container”.

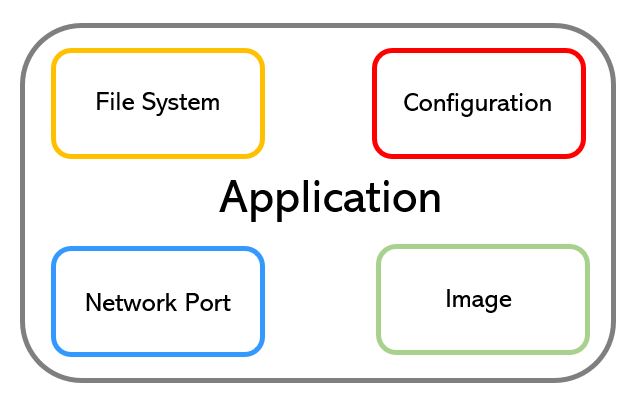

A container runs on an operating system and can be hosted in a virtual, physical, or Cloud architecture.

Why are containers, and skills in them, in such demand?

There are many reasons, and I list them below.

- IT managers need to move data among architectures to improve resilience and lower costs.

- Container technology simplifies code writing for developers because it uses a standard, widely used language.

- Services that run in containers are fast to develop, update, and change.

- The container is a de facto new standard with a great advantage: it gets over the obstacle of missing standards among architectures (private, hybrid, and public Cloud).

A deep dive into the last point above.

Every company has its own core business and, in the majority of cases, it needs IT.

Companies of any size?

Yes. Just think about your personal use of a mobile phone, perhaps to book a table at a restaurant or buy a movie ticket. I’m also quite sure IT will help us get over the Covid threat.

This is why I still think that IT is not a “cost” but a way to gain more business and money by improving efficiency in any company.

Are there specific features that allow data mobility in a Kubernetes environment?

Yes. One example is Kasten K10, which has many advanced workload migration features (the topic will be covered in detail in upcoming articles).

Data-Layer

What about services that can’t be containerized yet?

Is there a simple way to move data among different architectures?

Yes, that’s possible by using copies of the data of VMs and physical servers.

In this business scenario, it’s important that the software can create Backup/Replicas wherever the workloads are located.

Is it enough? No, the software must also be able to restore data across different architectures.

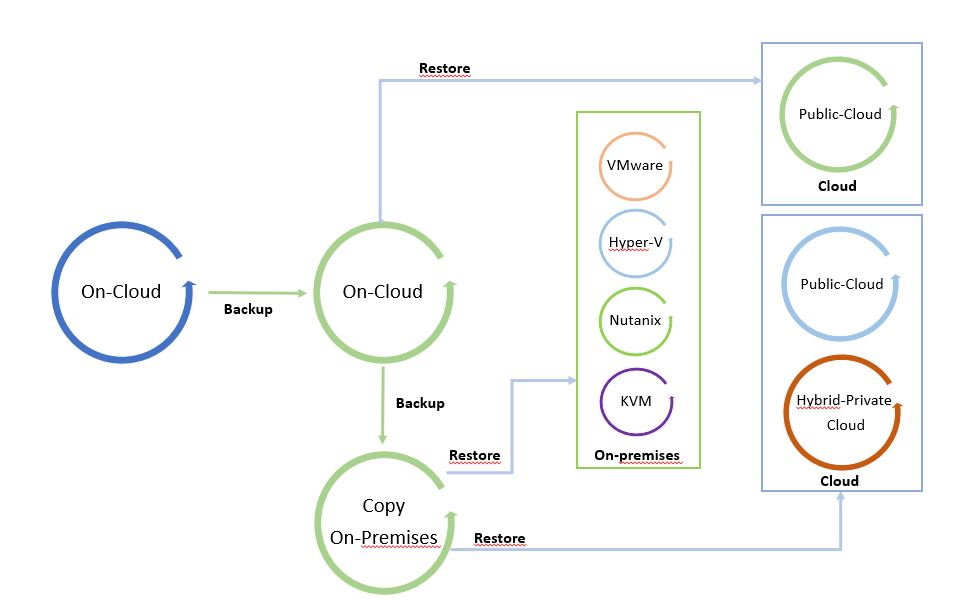

For example, a customer may need to restore some on-premises workloads from their VMware architecture to a public cloud, or restore a backup of a VM located in a public cloud to an on-premises Hyper-V environment.

In other words, it means working with backup/replica and restore in a multi-cloud environment.

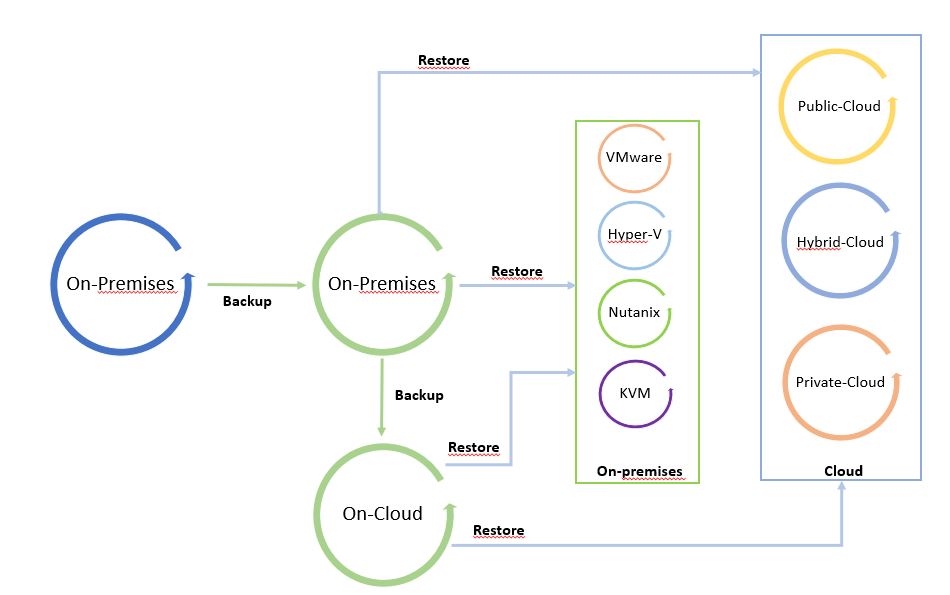

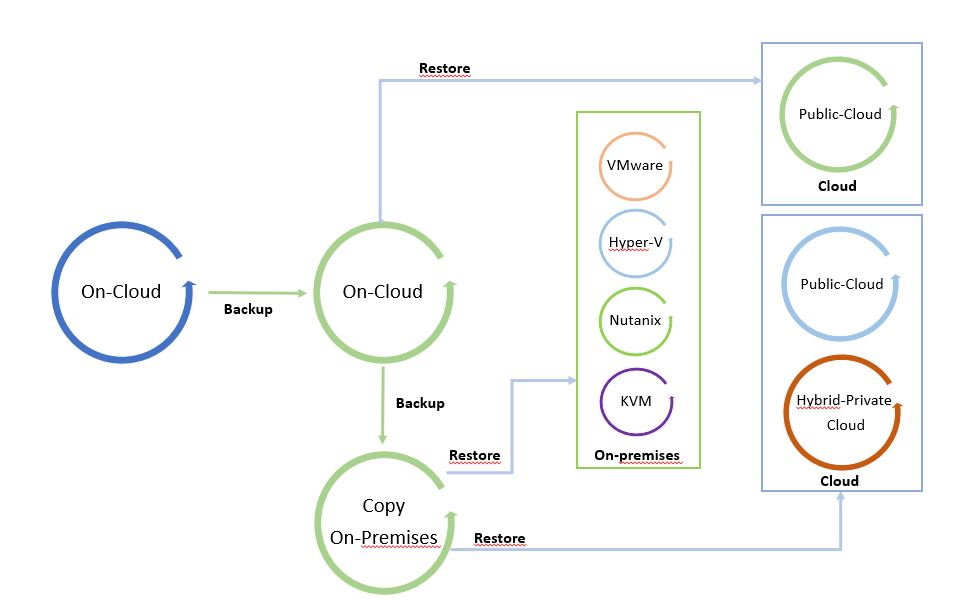

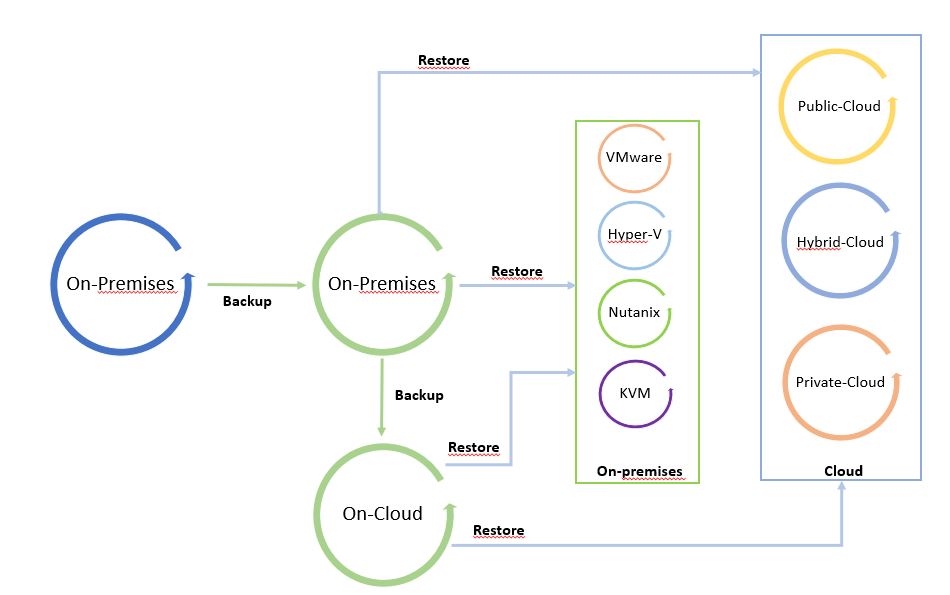

The next pictures show the Data Process.

I call it “The cycle of Data” because, starting from a copy, it is possible to freely move data to and from any infrastructure (public, hybrid, or private Cloud).

Pictures 1 and 2 are just examples of the data-mobility concept. They can be modified by adding more platforms.

The starting point of Picture 1 is an on-premises backup that can be restored on-premises or in the cloud. Picture 2 shows a backup of a public cloud workload restored in the cloud or on-premises.

It’s a circle where data can be moved around the platforms.
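To make the cycle more concrete, here is a minimal sketch in Python. It is only a toy model of the idea, not the API of any backup product: the platform names, the `Workload` and `Backup` classes, and the `take_backup`, `restore`, and `move` functions are all names I made up for illustration. The assumption is simply that a backup is a copy that can be restored on any known platform, not only on the one where it was taken.

```python
from dataclasses import dataclass

# Hypothetical platform names, used only for illustration.
PLATFORMS = ("vmware-on-prem", "hyperv-on-prem", "public-cloud")


@dataclass(frozen=True)
class Workload:
    name: str
    platform: str  # where the workload currently runs


@dataclass(frozen=True)
class Backup:
    workload_name: str
    source_platform: str  # where the copy was taken


def take_backup(workload: Workload) -> Backup:
    """Create a copy of the workload, remembering where it came from."""
    return Backup(workload.name, workload.platform)


def restore(backup: Backup, target_platform: str) -> Workload:
    """Restore a backup onto any known platform, not only its source."""
    if target_platform not in PLATFORMS:
        raise ValueError(f"unknown platform: {target_platform}")
    return Workload(backup.workload_name, target_platform)


def move(workload: Workload, target_platform: str) -> Workload:
    """Move a workload by backing it up and restoring the copy elsewhere."""
    return restore(take_backup(workload), target_platform)


if __name__ == "__main__":
    vm = Workload("erp-db", "vmware-on-prem")
    # Walk the circle: on-premises -> cloud -> another hypervisor -> back home.
    for target in ("public-cloud", "hyperv-on-prem", "vmware-on-prem"):
        vm = move(vm, target)
        print(f"{vm.name} now runs on {vm.platform}")
```

The point of the sketch is that `move` never needs to know the source platform: the copy is the only thing that travels, which is why the path closes into a circle.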

Note 4: A good suggestion is to use a data-mobility architecture to set up a cold disaster recovery site (cold because the data used to restore the site are backups).

Picture 1

Picture 2

There is one last point to complete this article: the replication features.

Note 5: By replica I mean a way to create a mirror of the production workload. Compared to backup, in this scenario the workload can be switched on without any restore operation because it is already written in the format of the host hypervisor.

The main purpose of replica technology is to create a hot Disaster Recovery site.
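The difference between a cold site (built from backups) and a hot site (built from replicas) can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the `recovery_steps` function and the step descriptions are hypothetical and do not come from any product.

```python
from typing import List


def recovery_steps(copy_type: str) -> List[str]:
    """Return the illustrative steps to bring a workload up at the DR site."""
    if copy_type == "backup":
        # Cold DR: the data must first be restored onto the target platform.
        return ["restore data to the target platform", "power on the workload"]
    if copy_type == "replica":
        # Hot DR: the replica is already in the host hypervisor's format.
        return ["power on the workload"]
    raise ValueError(f"unknown copy type: {copy_type}")


if __name__ == "__main__":
    for copy_type in ("backup", "replica"):
        print(copy_type, "->", recovery_steps(copy_type))
```

The replica path skips the restore step, which is what makes the site “hot”.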

More details about how to orchestrate DR are available on this site under the entry Veeam Availability Orchestrator (now Veeam Disaster Recovery Orchestrator).

Replication can be implemented with three different technologies:

- LUN/Storage replication

- I/O split

- Snapshot-based

I’m going to cover those scenarios and Kasten K10 business cases in future articles.

That’s all for today, folks.

See you soon, and take care.
