The first two articles explained what a container is (article 1) and how containers can talk to each other (article 2).

In this third article, I’m going to show how to deploy a service through this new and amazing container technology.

Note 1: I won’t cover the image deployment flow (Git, Jenkins, Docker repository, and so on) because my goal is to explain how to implement a service, not how to write lines of code.

Main points:

- As many of you already know, a service is a logical group of applications that talk to each other

- Every single application can run as an image

- Any image can run as a container

- Conclusion: With container technology, it is possible to build any service

An example could clarify the concept.

Example: Web application

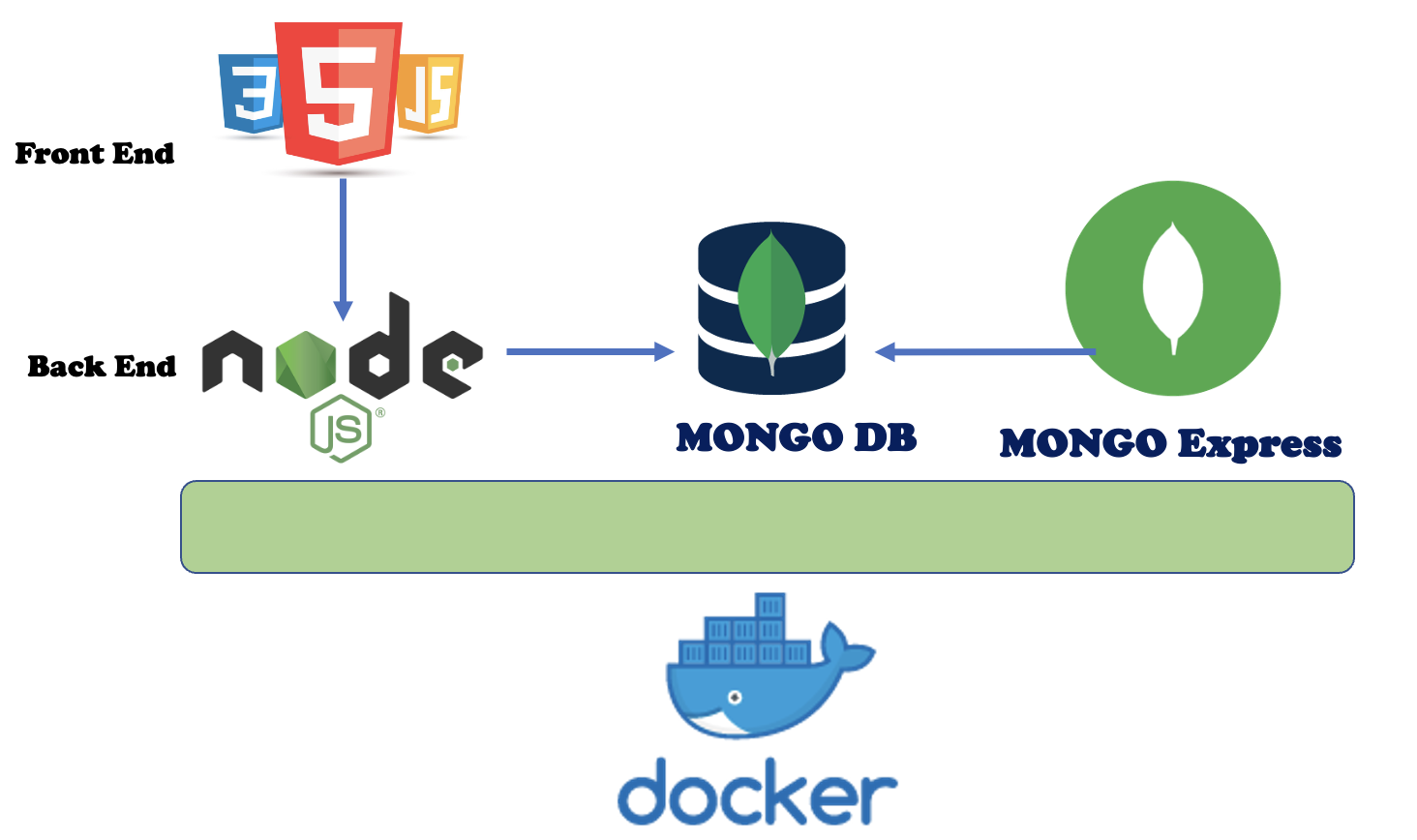

A classical web application is composed of a Front-End, a Back-End, and a DB.

In the traditional world, every single application runs on its own server (virtual or physical, it doesn’t matter).

This old scenario requires working with every single brick in the wall. To design the service correctly, deployers and engineers have to pay attention to every object in the stack: OS, drivers, networks, firewall, and so on.

Why?

Because these are separate groups of objects that need a compatibility and feasibility study to work properly together, and they also require strong security skills.

Furthermore, when the service is deployed and each application is installed, remote support from the development team is often required. The reason is that some deployment steps are unclear because they are not well documented (developers are not as good at writing documentation as they are at writing code). As a result, opening a ticket with customer service is quite normal.

Someone could object and suggest deploying the service on just one server. Unfortunately, that doesn’t solve the issue; it actually amplifies it, because in that scenario it’s common to run into scalability problems.

Let’s continue our example by talking about the architecture design and the components needed (Picture 1):

- Front End: HTML and JavaScript

- Back End: Node.js: a JavaScript runtime used to write server-side JavaScript applications (https://nodejs.org/it/)

- DB: MongoDB (https://hub.docker.com/_/mongo)

- DB Management: Mongo Express: A Web-based MongoDB admin interface (https://hub.docker.com/_/mongo-express)

Picture 1

Note 2: In the following lines, I will skip how to deploy the front end and the back end, as well as how to install Docker, because:

- Writing HTML and JavaScript files to create a website is quite easy. On the Internet, you can find a lot of examples that will meet your needs.

- Node.js is a very powerful open-source product, downloadable from its official website (linked above), where all the documentation needed to work with it is available.

- Docker is open-source software; it can also be downloaded from the official open-source website. The installation is a piece of cake.

My focus here is explaining how to deploy and work with Docker images. Today’s example uses the MongoDB and Mongo Express applications.

I grouped the steps into four main stages:

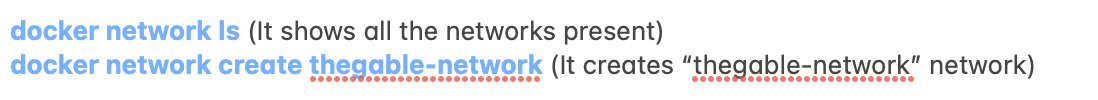

a) Create a network

The network allows communication to and from the containers.

In our example, the network will be named “thegable-network”.

From the console (terminal, PuTTY, ...), just run the following commands:

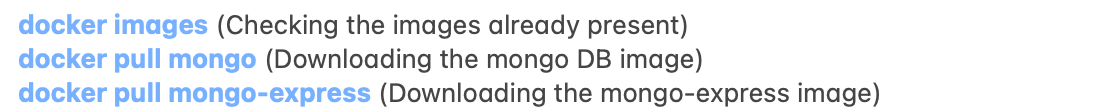
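In text form, this step could look like the minimal sketch below. Only the network name comes from this article; the variable name `NETWORK` and the error message are my own additions for illustration.

```shell
# Network name used throughout this article.
NETWORK="thegable-network"

# Create a user-defined bridge network. Containers attached to it can
# reach each other by container name.
docker network create "$NETWORK" \
  || echo "could not create $NETWORK (does it already exist? is Docker running?)" >&2
```

Afterwards, `docker network ls` should list the new network.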

b) Download the images needed from Docker Hub

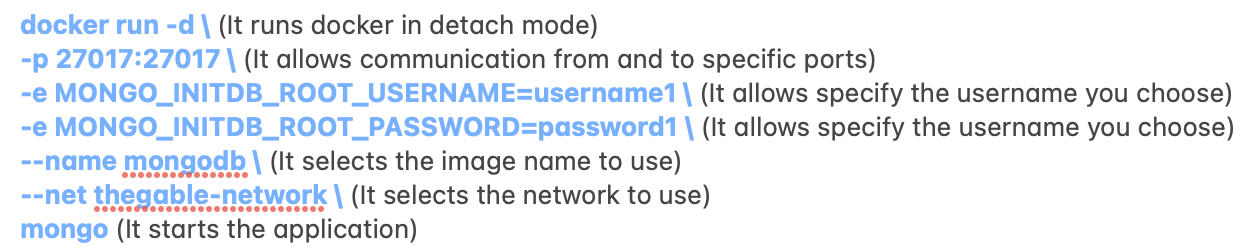
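As a rough text equivalent, the two official images linked earlier can be pulled as sketched here. No tag is given, so Docker falls back to `latest`; pinning a specific version is usually a better idea, but which one to pick is up to you.

```shell
# Official image names on Docker Hub (see the links in the component list).
IMAGES="mongo mongo-express"

# Pull each image; report a failure instead of stopping silently.
for image in $IMAGES; do
  docker pull "$image" || echo "could not pull $image" >&2
done
```

`docker images` then shows what is available locally.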

c) Run the MongoDB image with the correct settings:

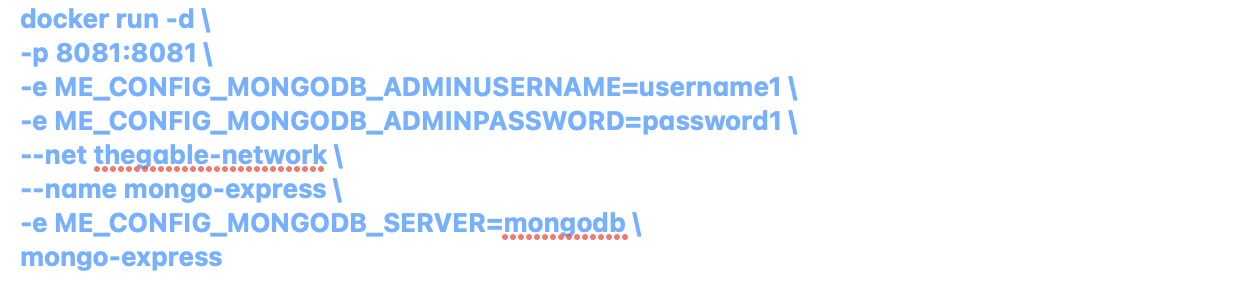
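A possible text version of this step is sketched below. The container name `mongodb`, the credentials `admin` / `password`, and the published port are assumptions made for this example, not values taken from the screenshot; the two `MONGO_INITDB_ROOT_*` variables are the ones documented on the image's Docker Hub page.

```shell
NETWORK="thegable-network"   # network name from this article
MONGO_CONTAINER="mongodb"    # assumed container name
MONGO_USER="admin"           # assumed credentials: change them
MONGO_PASSWORD="password"

# Start MongoDB detached, attach it to the network, publish the default
# port 27017 and set the root user through environment variables.
docker run -d \
  --name "$MONGO_CONTAINER" \
  --network "$NETWORK" \
  -p 27017:27017 \
  -e MONGO_INITDB_ROOT_USERNAME="$MONGO_USER" \
  -e MONGO_INITDB_ROOT_PASSWORD="$MONGO_PASSWORD" \
  mongo \
  || echo "could not start $MONGO_CONTAINER" >&2
```

`docker ps` should then list the running container, and `docker logs mongodb` shows its startup output.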

d) Run the mongo-express image with the correct settings:

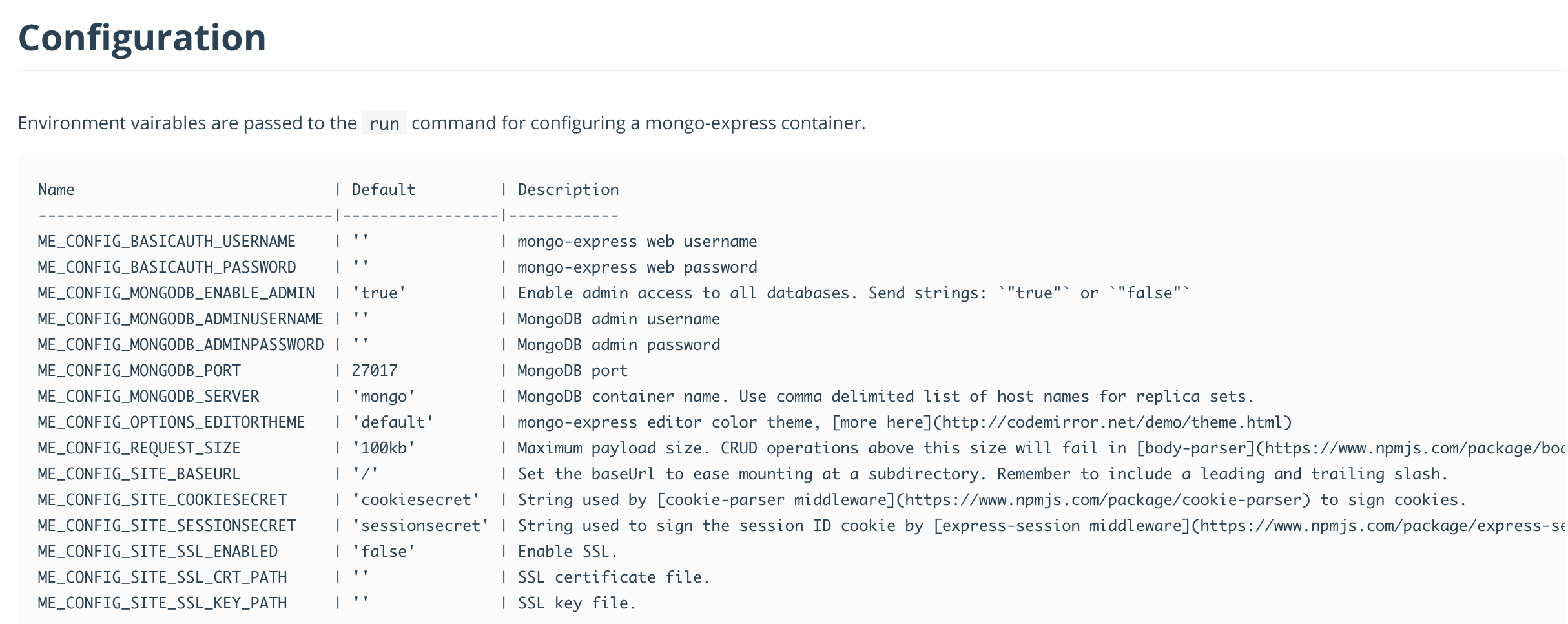
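Here is a hedged sketch of the same step as text. It assumes the MongoDB container is named `mongodb` and uses the credentials `admin` / `password` (both my own placeholders), and it publishes the mongo-express default port 8081. The `ME_CONFIG_*` variables are listed on the image's Docker Hub page; please check that page for the release you pull, because the supported settings can change between versions.

```shell
NETWORK="thegable-network"   # network name from this article
MONGO_CONTAINER="mongodb"    # assumed name of the MongoDB container
MONGO_USER="admin"           # assumed credentials: must match the MongoDB ones
MONGO_PASSWORD="password"

# Start mongo-express on the same network so that it can reach MongoDB
# by container name, and publish its web interface on port 8081.
docker run -d \
  --name mongo-express \
  --network "$NETWORK" \
  -p 8081:8081 \
  -e ME_CONFIG_MONGODB_SERVER="$MONGO_CONTAINER" \
  -e ME_CONFIG_MONGODB_ADMINUSERNAME="$MONGO_USER" \
  -e ME_CONFIG_MONGODB_ADMINPASSWORD="$MONGO_PASSWORD" \
  mongo-express \
  || echo "could not start mongo-express" >&2
```

With these assumptions, the web interface would be reachable at http://localhost:8081.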

Note 3: The configurable settings are documented on the Docker Hub page of each image.

For example, Picture 2 shows the common settings for mongo-express (https://hub.docker.com/_/mongo-express).

Picture 2

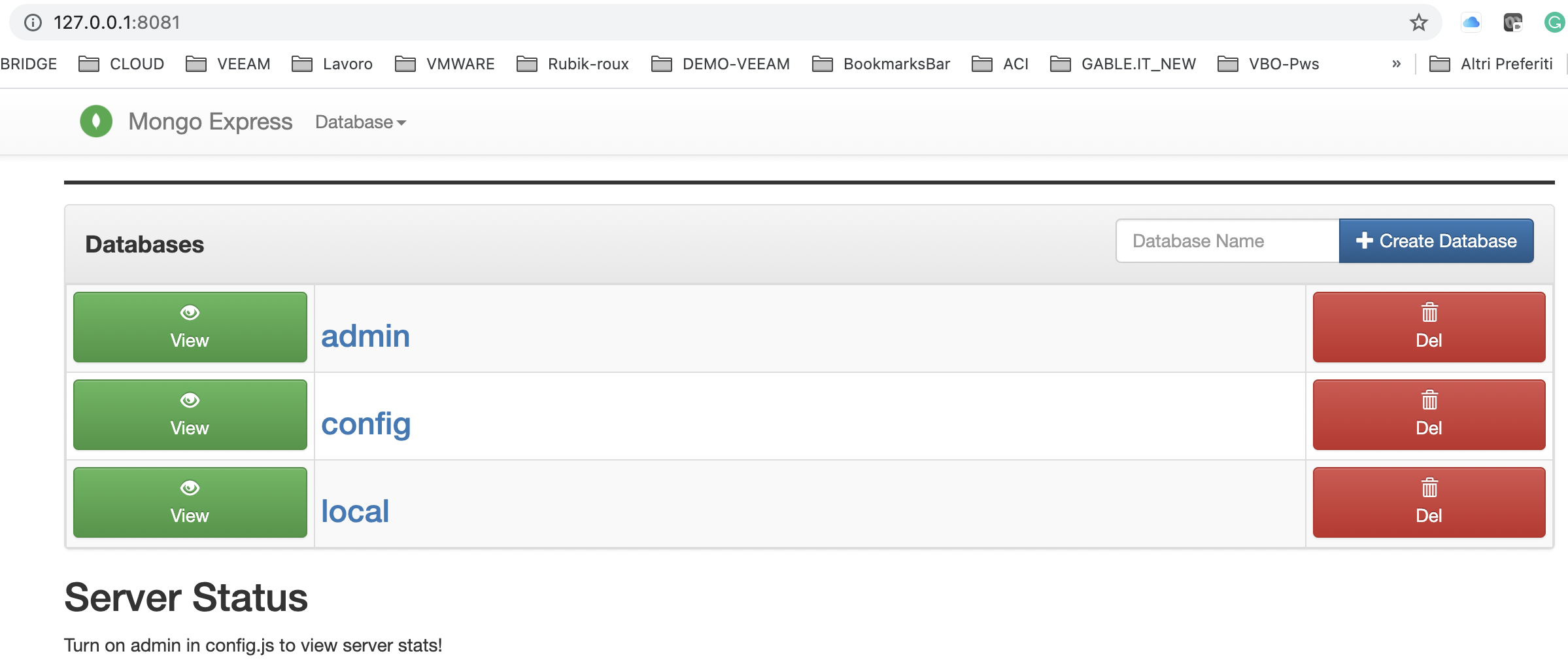

When you connect to the main web page of mongo-express (localhost:port), the default MongoDB databases should appear, as shown in Picture 3.

Picture 3

Now, by creating new MongoDB databases (for example, create a database named “my-GPDB” through the mongo-express web interface) and editing your JavaScript file, it’s possible to build your own web application.

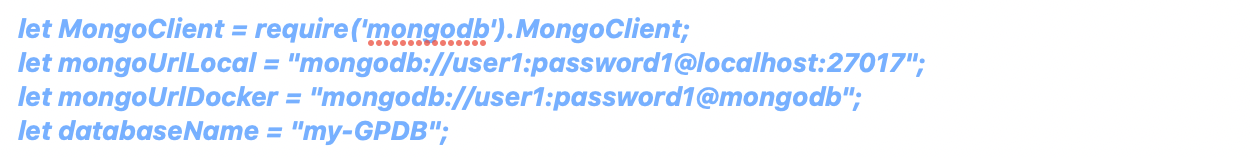

In the JavaScript file (normally named server.js), the main points for connecting to the DB are:

(Please refer to a JavaScript specialist to get all the details needed)
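To give a rough idea, the sketch below writes a hypothetical server.js from the console. It is not the file from my project: it assumes the official `mongodb` Node.js driver (installed with `npm install mongodb`), the placeholder credentials `admin` / `password`, and MongoDB published on localhost:27017.

```shell
# Write a minimal, hypothetical server.js that connects to MongoDB.
cat > server.js <<'EOF'
// Assumes the official driver: npm install mongodb
const { MongoClient } = require('mongodb');

// Assumed credentials and port: they must match the MongoDB container.
const uri = 'mongodb://admin:password@localhost:27017';
const client = new MongoClient(uri);

async function main() {
  await client.connect();
  // Database created through the mongo-express web interface.
  const db = client.db('my-GPDB');
  const collections = await db.listCollections().toArray();
  console.log('collections:', collections.map((c) => c.name));
  await client.close();
}

main().catch(console.error);
EOF
```

Running `node server.js` would then print the collections of “my-GPDB”. If the Node.js application itself runs in a container on the same network, the host in the URI would be the MongoDB container name instead of localhost.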

Is it easy? Yes, and this approach allows a fast and secure deployment.

In a word, it is amazing!

That’s all for now, folks.

The last article of this first series on modern applications will cover Docker Compose.

See you soon, and take care.